There's a common saying, "skate to where the puck is going to be", which essentially means that you should act on foresight rather than react to past events. However, it assumes that you know where the puck will be. AI hype makes that a challenge because of the amount of noise in the market. At Sentrix, we're seeing clear trends emerge. Fortunately, these trends continue earlier ones, which makes them easier to understand.

Software Development is starting to look more like an extended CI/CD pipeline.

Let's step back to a time before automated CI/CD pipelines. Long before CI/CD and AI, the Software Development Lifecycle (SDLC) had build and release processes, and these were handled by specialized Build Engineers. If you remember this era, then you also recall that integration was a persistent problem because bugs were discovered late in the process.

Now, let's jump forward a bit to the initial spread of CI/CD into engineering teams. At first, there was widespread skepticism. The concerns raised were "an automated process cannot catch bugs as effectively as manual QA", "tests are not deterministic enough to be relied upon", and "automating deployments would just push more defects faster". Sound familiar?

The excuses against CI/CD are similar to those used against AI today, and both essentially boil down to quality concerns.

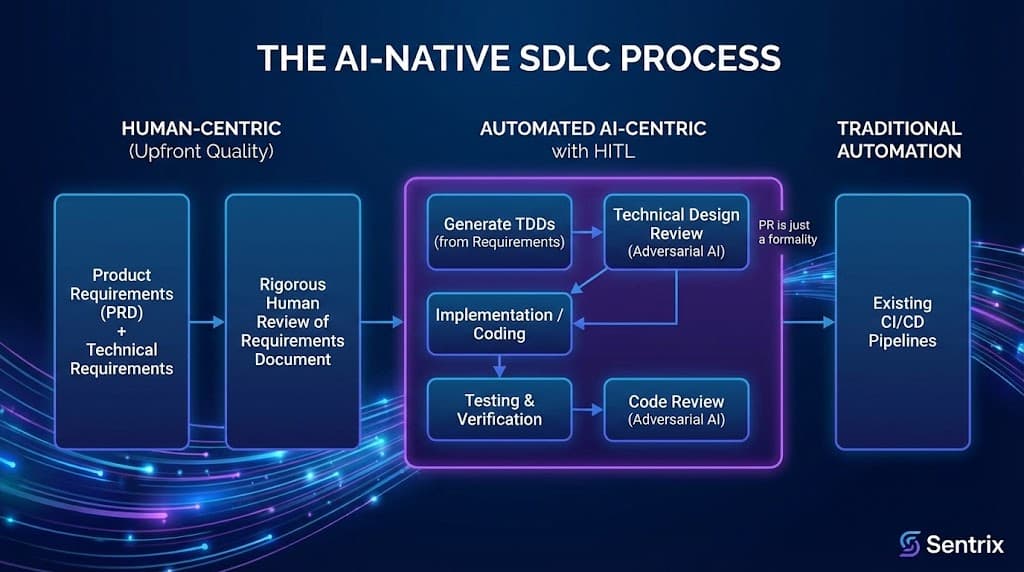

So, with this historical perspective in mind, we can now discuss where the puck is going to be. The AI-native SDLC is going to look like an extended CI/CD pipeline, except that the development phase will also be automated, so it'll be a CD/CI/CD pipeline, or Continuous Development / Continuous Integration / Continuous Deployment.

Crazy? Not really. Quality will still exist; it will simply shift further left, from the PR to the requirements review phase.

If we look at a mature SDLC within an enterprise (and we squint a little) we can see there is already a significant security gate in the technical design review. In a mature process, the Technical Design Review, or just Design Review, is where an engineer presents their technical designs to the principal, staff and senior engineers, plus the SRE/platform engineers, the InfoSec team, and so on. Usually, this process is brutal, in a good way. The design review meeting is where quality is baked into the product at an architecture and design level. Then, much later in the process, a pull request (PR) is the phase where the code (which presumably conforms to the technical design) is reviewed.

So how does this change with an AI-native SDLC? Quality shifts left.

A deliberately simplified AI-native SDLC:

- Human-centric portions of the process
  - Product requirements (PRD) + technical requirements = Requirements document
  - Rigorous human review of the Requirements document
- Automated AI-centric with HITL (as necessary)
  - Generate Technical Design Documents (TDDs) based on Requirements document
  - Technical design review - adversarial AI
  - Implementation / coding
  - Testing and verification
  - Code review - adversarial AI
  - PR is just a formality
- Traditional automation
  - Existing CI/CD pipelines

What has not changed is that engineers maintain quality controls. What has changed is that those controls have shifted left to reviewing the requirements document. The remainder of the process is largely automated with AI and tooling, and a human in the loop (HITL) can interrupt the automation when necessary.
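The simplified flow above can be sketched in code. This is a minimal Python sketch under stated assumptions, not a description of any real tool: the stage names mirror the list, while `Stage`, `StageResult`, `run_pipeline`, the `human_approved` and `touches_auth` fields, and the `ask_human` callback are all hypothetical names chosen for illustration. It tries to show the two ideas from the text: the human gate sits on the requirements document, and the automated stages run unattended unless one of them asks for a human.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class StageResult:
    ok: bool
    needs_human: bool = False  # the stage asks for a human-in-the-loop decision
    note: str = ""


@dataclass
class Stage:
    name: str
    run: Callable[[Dict], StageResult]


def run_pipeline(
    requirements: Dict,
    stages: List[Stage],
    ask_human: Callable[[str, str], bool],
) -> List[str]:
    """Run the automated stages in order and return a log of what happened."""
    # Human-centric gate: nothing is automated until the requirements
    # document has passed human review.
    if not requirements.get("human_approved", False):
        return ["requirements: blocked, not approved by human review"]

    log = ["requirements: approved by human review"]
    for stage in stages:
        result = stage.run(requirements)
        if result.needs_human:
            # HITL: the automation pauses and a person decides.
            if ask_human(stage.name, result.note):
                log.append(f"{stage.name}: approved by human ({result.note})")
                continue
            log.append(f"{stage.name}: rejected by human ({result.note})")
            return log
        if not result.ok:
            log.append(f"{stage.name}: failed ({result.note})")
            return log
        log.append(f"{stage.name}: passed")
    return log


def always_pass(_requirements: Dict) -> StageResult:
    # Placeholder for a stage that would call an AI agent or existing tooling.
    return StageResult(ok=True)


def adversarial_code_review(requirements: Dict) -> StageResult:
    # Hypothetical escalation rule: changes touching auth go to a human.
    if requirements.get("touches_auth", False):
        return StageResult(ok=False, needs_human=True, note="auth change")
    return StageResult(ok=True)


STAGES = [
    Stage("generate_tdd", always_pass),
    Stage("design_review", always_pass),
    Stage("implementation", always_pass),
    Stage("testing", always_pass),
    Stage("code_review", adversarial_code_review),
    Stage("ci_cd", always_pass),
]

if __name__ == "__main__":
    reqs = {"human_approved": True, "touches_auth": True}
    for line in run_pipeline(reqs, STAGES, ask_human=lambda stage, note: True):
        print(line)
```

In this sketch an unapproved requirements document stops everything before the first automated stage runs, which is one way to read "quality shifts left". The escalation rule is deliberately trivial; in practice each stage would decide for itself when to hand control back to a person.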